1st International Workshop on Mixed and Augmented Reality Experience Capture (XCAP’17)

at the 16th IEEE International Symposium on Mixed and Augmented Reality (ISMAR)

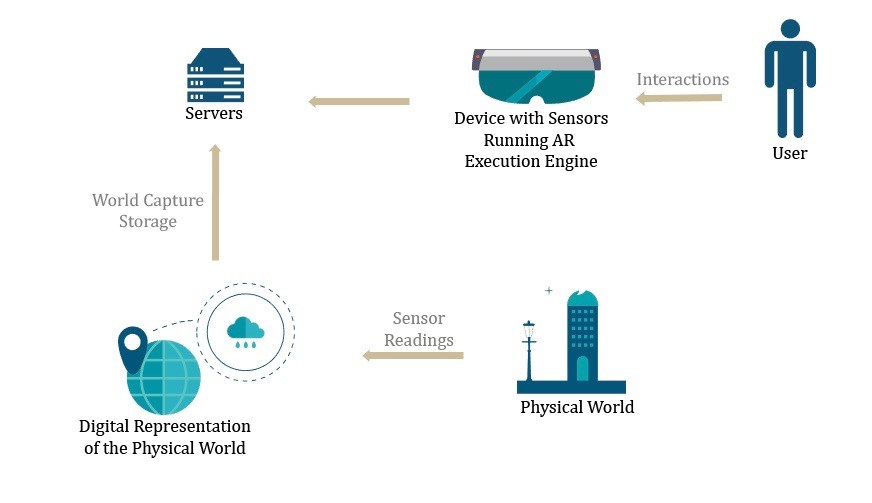

When the user of a Mixed and Augmented Reality (MAR) device is in an experience, they perceive the real world together with synchronized digital assets. The user’s senses continuously detect, in real time, the visual and/or audio signals produced by the combination of the real world and the digital assets.

As Mixed and Augmented Reality experiences become more common, developers, users, employers and sponsors will naturally need to capture accurate archives of MAR experiences and, in some circumstances, to share or publish them. For example:

- Workplace-based inspections could be captured, particularly those needed to certify that a process was performed in compliance with corporate or regulatory policies.

- A public safety officer using MAR to aid in posing questions when interviewing a suspect or witness may need to review the interview archive at a later time.

- People seeking to capture tribal knowledge or best practices may also use an MAR experience capture system to share them with novice users or students in the context of skills training or performance enhancement.

- Players of an MAR game may want to record, review or share their best moves.

The captured experience file will show the real world and digital content from the user’s point of view.

Supporting these capabilities requires systems connected to the MAR display and sensors, as well as a variety of recording and file storage technologies. Some preliminary tools and architectures have already been proposed.

The first to become available are designed to work with HoloLens and build on prior work published by Microsoft Research (see resources for developers). The field as a whole is very young and requires a great deal of further research.

WORKSHOP TOPICS

This workshop focuses on approaches and system architectures for content creation and management. Unlike the digital content perceived by the user within MAR display devices while an experience is presented, the content of concern to this workshop is linear, time-stamped content that can be captured and then played back, reviewed and otherwise treated as “normal” digital video content at any time in the future. This includes the possibility of multiple points of view (multiple cameras) and multiple microphones. It can also include metadata about the user’s geospatial position and orientation, hand positions and gaze direction, as well as the audio provided to the user and the photos or video viewed and captured. It encompasses media files in any format together with their associated metadata.

The topics and questions on which this workshop will focus include:

- Components, systems and architectures for MAR experience streaming and capture

- Design, selection and integration of sensors for MAR experience capture

- Local power and processor management during MAR experience capture

- Compression during or following MAR experience capture

- MAR experience capture metadata (e.g., session dates, times, duration, geospatial position and orientation, hand positions, gaze direction, audio provided to the user, photos taken or video viewed and captured)

- Novel visual interactions with archives of captured MAR experiences

- Network architectures for MAR experience capture and transport

- Components and/or systems for MAR experience archive storage, replication, management and access

- Benefits and drawbacks of distributed architectures for MAR experience capture and management

- Policies and guidelines for MAR experience capture and management

ORGANISERS

Chairs:

- Christine Perey, PEREY Research & Consulting, Switzerland

- Fridolin Wild, Oxford Brookes University, UK

- Patrick La Collet, Polytech Nantes/Université de Nantes, France

Programme Committee:

- Mikhail Fominykh, Independent, Norway

- Kaj Helin, VTT, Finland

- Ralf Klamma, RWTH Aachen, Germany

- Roland Klemke, Open University, Netherlands

- Carl Smith, Ravensbourne University, UK

- Carlo Vizzi, Altec, Italy

- Alla Vovk, Oxford Brookes University, UK

SUBMISSION

This workshop will feature presentations describing current or past research, design, practice or lessons learned in Mixed and Augmented Reality experience capture and the related topics listed above.

Authors are invited to submit papers via EasyChair (conference ID: XCAP17). Each contribution must include at least one paper and may be accompanied by links to downloadable files containing supplementary materials.

Workshop papers should be 2-4 pages long, submitted as PDF according to the ISMAR 2017 guidelines (these may change, so check them again shortly before submitting your paper) and formatted using the ISMAR 2017 paper templates provided on the conference’s submission guidelines page.

Submissions should not be anonymized; author names and affiliations should appear on the first page. At least one author of each accepted paper must attend the workshop and register for at least one day of the conference.

All accepted papers will be published in the ISMAR 2017 Adjunct Proceedings and IEEE Xplore.

IMPORTANT DATES

- Draft paper submission to program committee: July 3, 2017

- Notification of acceptance and feedback from committee reviewers: August 7, 2017

- Camera-ready version: August 28, 2017
